Azure Scale Set Monitoring With Prometheus and Grafana

When you run more and more machines, it becomes impractical to check on each of them by logging in and going through the numbers yourself. This is especially true when the number of machines varies, as it does in cloud scale sets.

So what can we do? Prometheus is a popular solution for collecting and storing metrics from your machines. You can then browse them either via its built-in web interface or with third-party apps like Grafana.

In this post we will look at a practical example of metric collection with Prometheus on Microsoft Azure scale sets. I assume that you already have an Azure deployment set up. If not, check out my post on Microsoft Azure VM deployment.

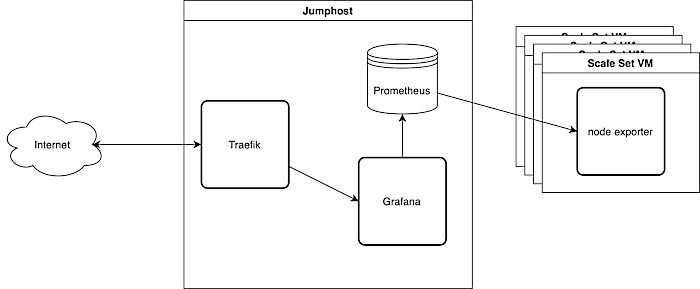

We will run Prometheus in a Docker container on a jumphost VM, using the Traefik instance that already runs there. I have a post about how to set up Traefik with Ansible on your jumphost if you need it. Prometheus will then fetch the metrics from a small exporter app on each of the Azure scale set VMs. Finally, we display the data with Grafana, which also runs in a container on the jumphost.

Export Metrics from Scale Set VMs

Prometheus relies on other apps called ‘exporters’ to make metrics available from various sources. Prometheus can collect metrics on just about anything, as long as they are served over HTTP in its common text format. There are exporters for many kinds of systems: Linux, MySQL, Jira, and many others. You can also write your own exporter. To get information about system utilization we will use an exporter called ‘node_exporter’.

Ubuntu ships a ready-made node_exporter package (prometheus-node-exporter) that we will install on our image.
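The provisioning step itself is not shown here. Below is a minimal sketch that assumes Packer runs an Ansible playbook as its provisioner; a shell provisioner calling apt-get would work just as well. The package name is Ubuntu's. The playbook layout is an assumption. Once the package is installed, its systemd service serves metrics on port 9100 by default.

```yaml
# Hypothetical Packer provisioning playbook: bake node_exporter into the image
- name: Install node_exporter in the scale set image
  hosts: all
  become: true
  tasks:
    - name: Install Ubuntu's node_exporter package
      ansible.builtin.apt:
        name: prometheus-node-exporter
        state: present
        update_cache: true

    - name: Enable the exporter so it starts on every VM created from the image
      ansible.builtin.systemd:
        name: prometheus-node-exporter
        enabled: true
```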

Prepare an Azure Image with Prometheus Node Exporter

As in Monero Mining on Azure, we use Packer to build and store an image on Microsoft Azure. Check out the Packer tutorials page for further documentation. Our demo image will be rather minimal and do nothing except export metrics.

To build the image, run Packer with its -force flag:

# Use "force" to overwrite an existing image
packer build -force <template>

If you need a newer Ubuntu base image, you can list the available ones with Microsoft's Azure CLI:

az vm image list -l northcentralus -p Canonical --all -s 22_04

But beware: Packer 1.8.0 has SSH issues with Ubuntu 22.04. Ubuntu 21.10 works for me; 22.04 does not.

Start a Microsoft Azure Scale Set with Ansible

You can start an Azure scale set with a single Ansible task if you already have a resource group, a virtual network and a subnet. I have a post on how to create your Azure virtual network and subnet.

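Here is a minimal sketch of such a task, using the azure_rm_virtualmachinescaleset module from the azure.azcollection collection. All variable names, the VM size, the admin user and the key path are hypothetical placeholders. The image entry points at the custom image built with Packer.

```yaml
# Hypothetical task: names, size and paths are placeholders for your environment
- name: Start a scale set from the node_exporter image
  azure.azcollection.azure_rm_virtualmachinescaleset:
    resource_group: "{{ resource_group }}"
    name: "{{ scaleset_name }}"
    vm_size: Standard_B1s
    capacity: 3                         # number of VM instances
    virtual_network_name: "{{ vnet_name }}"
    subnet_name: "{{ subnet_name }}"
    upgrade_policy: Manual
    admin_username: azureuser
    ssh_password_enabled: false
    ssh_public_keys:
      - path: /home/azureuser/.ssh/authorized_keys
        key_data: "{{ lookup('file', ssh_public_key_path) }}"
    managed_disk_type: Standard_LRS
    image:                              # the custom image built with Packer
      name: "{{ image_name }}"
      resource_group: "{{ image_resource_group }}"
```

Changing capacity and rerunning the task scales the set up or down.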

Now you have some VMs running in the cloud, exporting metrics. Let’s collect those metrics.

Collecting Metrics with Prometheus

Prometheus will collect and store our metrics. We start a Prometheus container on the jumphost and provide a config file that specifies how to reach the scale set VMs. We use Traefik to make the web interface available from the outside: Traefik reads the labels of the Docker container and configures its routing accordingly. Now you may wonder why we expose the data store to the internet. Well, it’s very useful for debugging: Prometheus ships its own web interface, in which you can browse the collected data and check the status of the scrape jobs.

The Ansible task simply copies the config and starts the container. We tell Traefik to put basic authentication in front of the web interface. It’s exposed to the web, after all.
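Here is a sketch of what such a task could look like. It assumes the community.docker collection, Traefik v2 label syntax, and a Docker network named traefik that is shared with the Traefik container. The paths, the template name prometheus.yml.j2, and the variables prometheus_domain and prometheus_htpasswd are hypothetical. Depending on your Traefik setup, you may also need entrypoint and TLS labels.

```yaml
# Hypothetical roles/prometheus/tasks/main.yml
- name: Create the Prometheus config directory
  ansible.builtin.file:
    path: /opt/prometheus
    state: directory
    mode: "0755"

- name: Render the Prometheus config from a template
  ansible.builtin.template:
    src: prometheus.yml.j2
    dest: /opt/prometheus/prometheus.yml
    mode: "0644"                        # the container user must be able to read it

- name: Start Prometheus behind Traefik
  community.docker.docker_container:
    name: prometheus
    image: prom/prometheus:latest
    state: started
    restart_policy: unless-stopped
    volumes:
      - /opt/prometheus/prometheus.yml:/etc/prometheus/prometheus.yml:ro
      - prometheus-data:/prometheus     # keep the metric store across restarts
    networks:
      - name: traefik                   # network shared with the Traefik container
    labels:
      traefik.enable: "true"
      traefik.http.routers.prometheus.rule: "Host(`{{ prometheus_domain }}`)"
      traefik.http.routers.prometheus.middlewares: prometheus-auth
      # Basic auth middleware, fed with the htpasswd-formatted string
      traefik.http.middlewares.prometheus-auth.basicauth.users: "{{ prometheus_htpasswd }}"
      traefik.http.services.prometheus.loadbalancer.server.port: "9090"
```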

We store our variables in vars/main.yml as always, and the secrets in a vault file that we can encrypt later. This way we can still search for the variable names while being able to safely commit the secrets to a repository.

We store the basic auth password in the vault so that we can reuse it later in other recipes. Traefik, however, expects the credentials in Apache htpasswd format. You can create such a string like this:

htpasswd -n promuser
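Put together, the two files could look like the sketch below. They are shown in one listing and separated by `---`. The file name vars/vault.yml and all variable names are assumptions. The vault_ prefix only makes it obvious where a value comes from. Encrypt the vault file with ansible-vault encrypt vars/vault.yml before committing it.

```yaml
# Hypothetical vars/main.yml: searchable names that point into the vault
prometheus_domain: prometheus.example.com
prometheus_user: promuser
prometheus_password: "{{ vault_prometheus_password }}"
prometheus_htpasswd: "{{ vault_prometheus_htpasswd }}"
azure_subscription_id: "{{ vault_azure_subscription_id }}"
azure_tenant_id: "{{ vault_azure_tenant_id }}"
azure_client_id: "{{ vault_azure_client_id }}"
azure_client_secret: "{{ vault_azure_client_secret }}"
---
# Hypothetical vars/vault.yml: the actual secrets, encrypted before committing
vault_prometheus_password: "<plain password for promuser>"
vault_prometheus_htpasswd: "<output of htpasswd -n promuser>"
vault_azure_subscription_id: "<subscription id>"
vault_azure_tenant_id: "<tenant id>"
vault_azure_client_id: "<service principal application id>"
vault_azure_client_secret: "<service principal secret>"
```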

In the Prometheus config file we specify the Microsoft Azure account credentials that Prometheus uses to discover the VMs it should scrape. By default, Prometheus will then try to collect metrics from all machines in the subscription. If you want to narrow it down, you can specify a resource group.
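Below is a minimal sketch of the scrape job, written as an Ansible template so that the credentials come from the vault. It assumes a service principal (client ID and secret) with read access to the subscription. The variable names are hypothetical. Drop the resource_group line to discover VMs across the whole subscription. Prometheus scrapes the VMs' private IP addresses, so the jumphost must be able to reach them on port 9100.

```yaml
# Hypothetical prometheus.yml.j2, rendered by Ansible
global:
  scrape_interval: 15s

scrape_configs:
  - job_name: "scalesetvms"
    azure_sd_configs:
      - subscription_id: "{{ azure_subscription_id }}"
        tenant_id: "{{ azure_tenant_id }}"
        client_id: "{{ azure_client_id }}"
        client_secret: "{{ azure_client_secret }}"
        # Optional: only discover VMs in this resource group
        resource_group: "{{ azure_resource_group }}"
        # Azure discovery defaults to port 80; node_exporter listens on 9100
        port: 9100
```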

OK, now Prometheus will collect metrics from your VMs. Let’s prepare Grafana…

Provision Grafana Datasources

We could manually add the Prometheus container as a datasource in Grafana, but this is not the way. Instead we provision the Prometheus datasource when we set up Grafana. That won’t require huge changes. We just have to copy a configuration file to the right directory.

Our Grafana Ansible task then sets up the config directories, copies the config files and starts the Grafana container:
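Here is a sketch of these tasks. It assumes the community.docker collection, Traefik v2 labels and a Docker network named traefik shared with Traefik. The host paths, the template name datasource.yml.j2 and grafana_domain are hypothetical. Only the datasources subdirectory is mounted, so the image's other provisioning directories stay untouched.

```yaml
# Hypothetical roles/grafana/tasks/main.yml
- name: Create the Grafana datasource provisioning directory
  ansible.builtin.file:
    path: /opt/grafana/provisioning/datasources
    state: directory
    mode: "0755"

- name: Copy the Prometheus datasource definition
  ansible.builtin.template:
    src: datasource.yml.j2
    dest: /opt/grafana/provisioning/datasources/prometheus.yml
    mode: "0644"

- name: Start Grafana behind Traefik
  community.docker.docker_container:
    name: grafana
    image: grafana/grafana:latest
    state: started
    restart_policy: unless-stopped
    volumes:
      # Grafana reads provisioning files below /etc/grafana/provisioning at startup
      - /opt/grafana/provisioning/datasources:/etc/grafana/provisioning/datasources:ro
      - grafana-data:/var/lib/grafana   # keep users and settings across restarts
    networks:
      - name: traefik
    labels:
      traefik.enable: "true"
      traefik.http.routers.grafana.rule: "Host(`{{ grafana_domain }}`)"
      traefik.http.services.grafana.loadbalancer.server.port: "3000"
```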

And this is the datasource file. It simply contains the Prometheus URL and auth information along with some preferences.
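Below is a sketch of such a file in Grafana's datasource provisioning format. It assumes Grafana reaches Prometheus through its public Traefik URL, which is why it carries the basic auth credentials. The variables prometheus_domain, prometheus_user and prometheus_password are hypothetical.

```yaml
# Hypothetical datasource.yml.j2, rendered into provisioning/datasources/
apiVersion: 1

datasources:
  - name: Prometheus
    type: prometheus
    access: proxy                       # Grafana's backend sends the queries
    url: "https://{{ prometheus_domain }}"
    isDefault: true
    editable: false
    basicAuth: true
    basicAuthUser: "{{ prometheus_user }}"
    secureJsonData:
      basicAuthPassword: "{{ prometheus_password }}"
```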

That’s it for Grafana. Of course you could also provision one or more dashboards but that’s probably out of scope for this post.

Finishing Touches

There are two files missing from the description above. Also, we can improve the display of metrics in Grafana by relabeling the metrics.

Provision the Jumphost

I assume you will integrate the Prometheus and Grafana roles into your own playbooks. If you don’t have one already, here is mine. It is based on my previous posts on Microsoft Azure VM deployment and how to set up Traefik with Ansible. You might want to tune it to fit your needs.
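If you just want a starting point, a minimal playbook could look like this sketch. The role names and the vars file paths are hypothetical; swap in whatever roles set up Docker and Traefik in your environment.

```yaml
# Hypothetical site.yml: one role per service on the jumphost
- name: Provision the jumphost
  hosts: jumphost
  become: true
  vars_files:
    - vars/main.yml
    - vars/vault.yml                    # encrypted with ansible-vault
  roles:
    - docker
    - traefik
    - prometheus
    - grafana
```

You would then run it with ansible-playbook -i hosts.yml site.yml --ask-vault-pass.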

Ansible Hosts File

In our scripts we referenced the variable ansible_host several times. We provide its value in our hosts.yml file, which lists the hosts that we want to configure. In our case, that’s just the jumphost. We do not communicate directly with the scale set VMs; that’s what we have Prometheus for.
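A minimal hosts.yml in Ansible's YAML inventory format might look like this. The host alias, address and user are hypothetical placeholders.

```yaml
# Hypothetical hosts.yml: only the jumphost is managed by Ansible
all:
  hosts:
    jumphost:
      ansible_host: jumphost.example.com   # DNS name or public IP of the jumphost
      ansible_user: azureuser
```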

Add Azure-Specific Labels

Right now Prometheus stores the scale set VM metrics just as they come in. Unfortunately, these metrics do not include Azure-specific information like the machine name, resource group name, etc. But Prometheus can attach these values as labels while scraping the VMs.

The following example shows how to add the machine name to each metric scraped from a scale set VM, using the label azure_machine_name. Just change your Prometheus configuration file accordingly.

There are more labels available in the Prometheus documentation for azure_sd_config.

- job_name: "scalesetvms"
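Filled out, a job with relabeling might look like the following sketch. Each rule copies a discovery meta label into a regular label that is stored with every scraped series; the default relabel action is replace. The labels __meta_azure_machine_name, __meta_azure_machine_resource_group and __meta_azure_machine_scale_set are documented azure_sd_config meta labels. The target label names are our own choice, and the credential variables are hypothetical Ansible template variables.

```yaml
scrape_configs:
  - job_name: "scalesetvms"
    azure_sd_configs:
      - subscription_id: "{{ azure_subscription_id }}"
        tenant_id: "{{ azure_tenant_id }}"
        client_id: "{{ azure_client_id }}"
        client_secret: "{{ azure_client_secret }}"
        port: 9100
    relabel_configs:
      # Copy discovery metadata into labels kept on every scraped series
      - source_labels: [__meta_azure_machine_name]
        target_label: azure_machine_name
      - source_labels: [__meta_azure_machine_resource_group]
        target_label: azure_resource_group
      - source_labels: [__meta_azure_machine_scale_set]
        target_label: azure_scale_set
```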

Conclusion

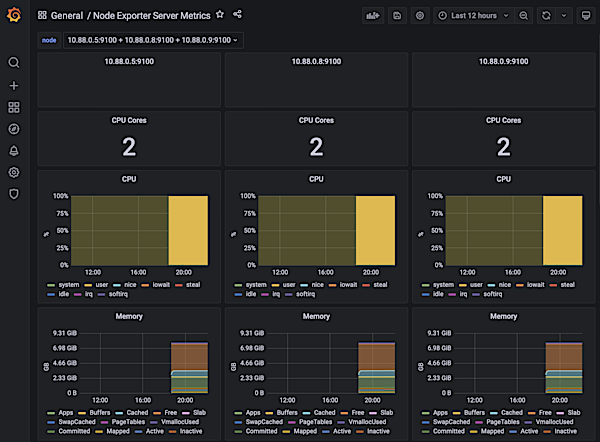

When everything is set up, you should have a Grafana instance with a preconfigured datasource fetching from Prometheus. Here is an example displaying three VMs running node_exporter, using Grafana dashboard 405.

Prometheus makes it easy to collect metrics from your Microsoft Azure scale set VMs. The configuration using azure_sd_config may seem a bit “magic”, but it works and adapts automatically when you add or remove VMs from the scale set.