Collecting logs in the cloud with Grafana Loki

In the good old days you had one server running your services. When something failed, you logged in via SSH and checked the corresponding log file. Today there is rarely a single server running all your services, so the log files are spread over multiple machines, each with its own way of accessing them. Between journald, docker logs, syslog and plain files there are just too many places to check efficiently, especially if you use scale sets on Azure or something equivalent to dynamically adjust the number of VMs to the workload.

A common way to solve this problem is to introduce an Elasticsearch, Logstash and Kibana (ELK) stack that gathers the logs and makes them searchable. That’s a nice solution, albeit a resource-intensive one.

We want to look at a more lightweight alternative: the log aggregator Grafana Loki. Like Elasticsearch, it stores logs that are gathered by log shippers such as Promtail. You can then display the logs using Grafana.

Loki is much lighter than Elasticsearch, mostly because it omits Elasticsearch’s main feature: search. Instead, and much like Prometheus, Loki stores log lines annotated with tags that you can filter on later. The log text itself is not indexed, so there is no real-time full-text search.

The upside is low hardware requirements. I run Loki comfortably on a Raspberry Pi 3B, where it collects logs from several systems while staying below 1% CPU at all times. An ELK stack would have serious problems even running on that board, mostly due to its 1 GB of system memory.

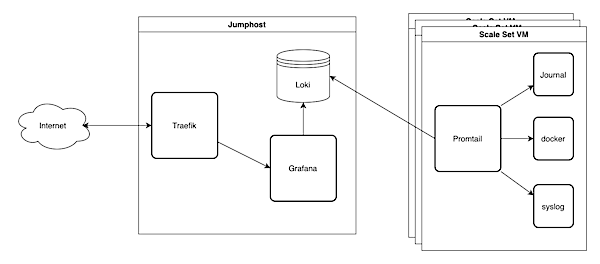

Our example setup runs on Microsoft Azure. A jumphost runs Loki and Grafana, each in its own Docker container. We will later visualize our logs with Grafana. A Traefik container serves as reverse proxy and also takes care of SSL. I will not describe the Traefik setup here, but there is a blog post on how to set up Traefik with Ansible. We will use Ansible to deploy the setup, and I assume that you have a working development setup with Ansible and Azure. If you need some guidance, there is a post on Microsoft Azure VM deployment with Ansible.

To get some logs we will start an Azure scale set. On each scale set VM a log shipper called Promtail will collect logs from journald and send them to Loki on the jumphost VM.

Collecting Logs with Grafana Loki

Grafana Loki stores logs and makes them available for visualization. So we don’t really have to configure anything in Loki; we just make it reachable for our VMs and for Grafana. Our reverse proxy Traefik then adds SSL and basic auth based on the configuration that we put into the labels of the Loki Docker container.
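As a rough sketch, a task for the Loki container could look like the following. It assumes the `community.docker` collection and Traefik v2 label syntax. The network name `web`, the entrypoint `websecure`, the certificate resolver `letsencrypt`, the `loki.` subdomain, the host path and the variable `vault_loki_htpasswd` are all placeholders of mine, not names from the original setup.

```yaml
- name: Start the Loki container behind Traefik
  community.docker.docker_container:
    name: loki
    image: grafana/loki:latest          # better pin a fixed version
    restart_policy: unless-stopped
    networks:
      - name: web                       # assumed network shared with Traefik
    volumes:
      # assumed host path; the image reads its config from this container path
      - /opt/loki/config.yml:/etc/loki/local-config.yaml:ro
    labels:
      traefik.enable: "true"
      traefik.http.routers.loki.rule: "Host(`loki.{{ ansible_host }}`)"
      traefik.http.routers.loki.entrypoints: "websecure"
      traefik.http.routers.loki.tls.certresolver: "letsencrypt"
      # attach a basic auth middleware to the router
      traefik.http.routers.loki.middlewares: "loki-auth"
      traefik.http.middlewares.loki-auth.basicauth.users: "{{ vault_loki_htpasswd }}"
      # Loki listens on port 3100 by default
      traefik.http.services.loki.loadbalancer.server.port: "3100"
```

Loki does not publish any port on the host here; all traffic is meant to go through Traefik.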

We use a near-default config file with just the host filled in.

We store our variables in vars/main.yml as always, and the secrets in a vault file that we can encrypt later. This way we can still search for the variables while also being able to safely commit them to a repository.

We store the password for basic auth in the vault so that we can later use it in other recipes. But Traefik reads the password in Apache htpasswd format. You can create such a string like this:

htpasswd -n lokiuser

Our vault file then looks like this:
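A vault file along these lines would fit the setup. The file name and all variable names are assumptions of mine, and the values are placeholders:

```yaml
# vars/vault.yml (assumed file name)
# Encrypt it with: ansible-vault encrypt vars/vault.yml
vault_loki_user: lokiuser
# Plain password, needed later by Promtail and Grafana
vault_loki_password: "change-me"
# Output of `htpasswd -n lokiuser`, consumed by the Traefik basic auth labels
vault_loki_htpasswd: "lokiuser:<hash printed by htpasswd>"
```

Keeping both the plain password and the hashed string in the vault means the clients and Traefik always use the same credentials.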

Export Logs from Scale Set VMs

Loki relies on other apps called ‘clients’ to collect logs from various sources and push them to Loki via an HTTP API. There are several clients available. In our example we will use Promtail. Promtail can read logs from various sources like files, Kubernetes, Docker, etc.

Prepare an Azure Image with Grafana Promtail

As in the Monero Mining on Azure post, we use Packer to build and store an image on Microsoft Azure. Check out the Packer tutorials page for further documentation. Our demo image will be rather minimal and do nothing except collect and export logs.

There is no Promtail package in the Ubuntu sources, so we manually download Promtail and install the binary together with our config file and a systemd unit file.

In the config we specify the URL to the Loki container. Promtail will then push the collected logs through the Loki HTTP API. Traefik protects Loki with basic auth so we have to fill the username and password in the Promtail config, too. Of course Promtail can’t access the Ansible vault so we will fill these values when building the image using a separate Ansible playbook.

However, Promtail can use environment variables in its config file, and we use that here to add a tag to the labels with the $HOSTNAME of the system running Promtail. We can use it later to identify the machine.
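To make this more concrete, here is a minimal sketch of such a Promtail config as an Ansible template. The Loki hostname, the positions path and the variable names `vault_loki_user` and `vault_loki_password` are assumptions. The label names job, hostname and unit match the tags we filter on in Grafana later.

```yaml
# promtail.yml.j2 (assumed template name)
server:
  http_listen_port: 9080
  grpc_listen_port: 0

positions:
  filename: /var/lib/promtail/positions.yaml   # assumed path

clients:
  - url: "https://loki.{{ ansible_host }}/loki/api/v1/push"
    basic_auth:
      username: "{{ vault_loki_user }}"
      password: "{{ vault_loki_password }}"
    external_labels:
      # replaced by Promtail at startup, not by Ansible
      hostname: ${HOSTNAME}

scrape_configs:
  - job_name: journal
    journal:
      max_age: 12h
      labels:
        job: systemd-journal
    relabel_configs:
      # expose the systemd unit name as the label "unit"
      - source_labels: ['__journal__systemd_unit']
        target_label: unit
```

As far as I know, Promtail only expands `${HOSTNAME}` when it is started with the flag `-config.expand-env=true`.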

Unfortunately systemd does not provide the environment variable $HOSTNAME in its default configuration. It should be possible to define further environment variables for your service in the systemd unit file, but none of the ways I tried worked for me. As a last resort I wrapped the Promtail call in a bash call, because bash evaluates the hostname correctly.
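The bash wrapper could look roughly like this. I wrote the unit as an Ansible copy task to stay in YAML; the same unit text could just as well be shipped into the image by Packer. The binary path, config path and unit name are assumptions.

```yaml
- name: Install a systemd unit that starts Promtail through bash
  ansible.builtin.copy:
    dest: /etc/systemd/system/promtail.service
    mode: "0644"
    content: |
      [Unit]
      Description=Promtail log shipper
      After=network-online.target
      Wants=network-online.target

      [Service]
      # "$$" is how systemd passes a literal "$" on to bash
      ExecStart=/bin/bash -c 'export HOSTNAME=$$(hostname); exec /usr/local/bin/promtail -config.file=/etc/promtail/config.yml -config.expand-env=true'
      Restart=on-failure

      [Install]
      WantedBy=multi-user.target
```

The `exec` replaces the bash process with Promtail, so systemd supervises Promtail directly instead of the wrapper shell.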

As mentioned above, we need Ansible to render a config file for Promtail with the authentication data from the Ansible vault before building the image. So here is a short playbook that does exactly that. Note that you have to manually overwrite the ansible_host variable. Otherwise it would resolve to localhost, because that’s where you render the config template.
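A minimal sketch of such a playbook follows. The file paths, the template name and the example hostname are assumptions of mine:

```yaml
- name: Render the Promtail config for the Packer build
  hosts: localhost
  connection: local
  gather_facts: false
  vars_files:
    - vars/main.yml
    - vars/vault.yml                      # assumed vault file name
  vars:
    # Overwrite ansible_host so the Loki URL points at the jumphost
    # instead of localhost (placeholder hostname)
    ansible_host: jumphost.example.com
  tasks:
    - name: Render the Promtail config from the template
      ansible.builtin.template:
        src: templates/promtail.yml.j2    # assumed template path
        dest: packer/promtail.yml         # assumed output path read by Packer
        mode: "0600"
```

Run it with `--ask-vault-pass` or a vault password file so that Ansible can decrypt the secrets. The rendered file contains the password in plain text, so it should not be committed.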

Start a Microsoft Azure Scale Set with Ansible

You can start an Azure scale set with just one Ansible task if you already have a resource group, virtual network and subnet. I have a post on how to create your Azure virtual network and subnet.
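A sketch of such a task, assuming the `azure.azcollection` collection is installed. All names, the VM size, the capacity and the key path are placeholders; the image name stands for whatever you called the image built with Packer.

```yaml
- name: Create a scale set from the Promtail image
  azure.azcollection.azure_rm_virtualmachinescaleset:
    resource_group: my-resource-group      # placeholder
    name: loki-demo-vmss                   # placeholder
    vm_size: Standard_B1s                  # placeholder size
    capacity: 2                            # number of VMs to start
    upgrade_policy: Manual
    virtual_network_name: my-vnet          # placeholder
    subnet_name: my-subnet                 # placeholder
    admin_username: azureuser
    ssh_password_enabled: false
    ssh_public_keys:
      - path: /home/azureuser/.ssh/authorized_keys
        key_data: "{{ lookup('file', '~/.ssh/id_rsa.pub') }}"
    managed_disk_type: Standard_LRS
    image:
      name: promtail-image                 # placeholder for the Packer image
      resource_group: my-resource-group
```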


Now you have some VMs running in the cloud, exporting logs to Loki.

Provision Grafana Datasources

We could manually add the Loki container as a datasource in Grafana, but this is not the way. Instead we provision the Loki datasource when we set up Grafana. That won’t require huge changes. We just have to copy a configuration file to the right directory.

Our Grafana Ansible task then sets up the config directories, copies the config files and starts the Grafana container:
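In outline, these tasks could look like this. The host directory, the template name and the network name are assumptions, and I left out the Traefik labels to keep the sketch short.

```yaml
- name: Create the Grafana provisioning directory
  ansible.builtin.file:
    path: /opt/grafana/provisioning/datasources   # assumed host path
    state: directory
    mode: "0755"

- name: Copy the Loki datasource definition
  ansible.builtin.template:
    src: templates/loki-datasource.yml.j2         # assumed template name
    dest: /opt/grafana/provisioning/datasources/loki.yml
    mode: "0644"

- name: Start the Grafana container
  community.docker.docker_container:
    name: grafana
    image: grafana/grafana:latest                 # better pin a fixed version
    restart_policy: unless-stopped
    networks:
      - name: web                                 # assumed network shared with Traefik
    volumes:
      # Grafana reads provisioning files from this container path at startup
      - /opt/grafana/provisioning:/etc/grafana/provisioning:ro
```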

And this is the datasource file. It simply contains the Loki URL and auth information along with some preferences.
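A minimal datasource file in Grafana’s provisioning format might look like this. The URL and the variable names are assumptions, and `maxLines` is just one example of a preference:

```yaml
apiVersion: 1

datasources:
  - name: Loki
    type: loki
    access: proxy                      # Grafana's backend talks to Loki
    url: "https://loki.{{ ansible_host }}"
    basicAuth: true
    basicAuthUser: "{{ vault_loki_user }}"
    secureJsonData:
      basicAuthPassword: "{{ vault_loki_password }}"
    jsonData:
      maxLines: 1000                   # upper limit of log lines per query
    editable: false
```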

That’s it for Grafana. Of course you could also provision one or more dashboards but that’s probably out of scope for this post.

Finishing Touches

Provision the Jumphost

I assume you will integrate the Loki and Grafana roles into your own playbooks. If you don’t have one already, here is mine. It is based on my previous posts on Microsoft Azure VM deployment and how to set up Traefik with Ansible. You might want to tune it to fit your needs.

Ansible Hosts File

In our scripts we referenced the variable ansible_host several times. We provide that value in our hosts.yml file. The hosts file contains a list of the hosts that we want to configure. In our case, that’s just the jumphost. We do not communicate directly with the scale set VMs; that’s what we have Promtail for.
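A hosts.yml with a single jumphost can be as small as this sketch; the hostname and user are placeholders:

```yaml
all:
  hosts:
    jumphost:
      ansible_host: jumphost.example.com   # placeholder DNS name of the jumphost
      ansible_user: azureuser              # placeholder SSH user
```

You would then pass it to your playbook with `-i hosts.yml`.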

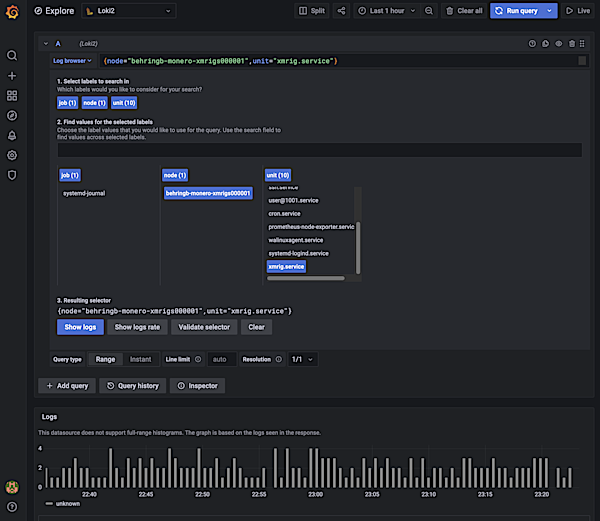

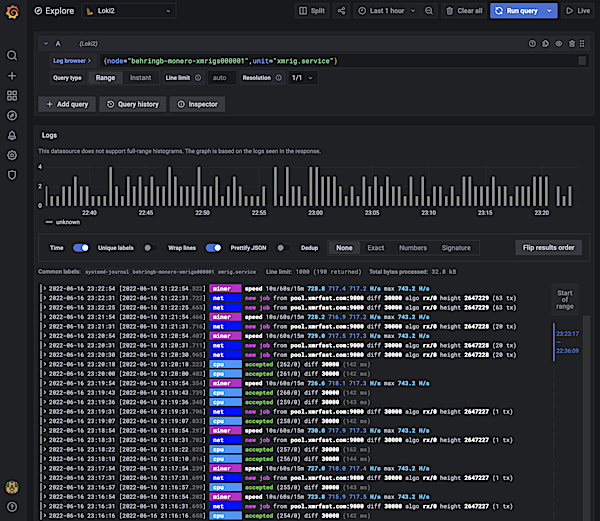

Digging through logs in Grafana

To dig through the logs open Grafana and select the Explore section on the left menu bar. Then select Loki as datasource at the top and click “Log Browser” in the first query. Now you should see a list of tags like job, hostname and unit. Select the values that match the logs that you are looking for and click “Show Logs”. Now you can scroll through the collected logs. Adjust your timeframe in the upper right corner.

Conclusion

Grafana Loki is a lightweight alternative to other log aggregation stacks like ELK. Loki does not offer full-text search like Elasticsearch, but you can filter your logs by tags. I have been using Loki for a while now on a Raspberry Pi 3B without any problems. I don’t miss the more advanced features of Elasticsearch and appreciate that I can run it 24/7 on very weak hardware. If you can live without full-text search and other Elasticsearch features, it’s worth trying out.